
INTRO: I have dabbled twice in the stock market with very little success. One takeaway from my experience was that the stock market is not for the faint-hearted, much like a day at the race track. I figured that the stock market was gambling for rich people. I went in very naive and bet on companies I liked, with no more analysis than that. My biggest win was a 10% increase in 24 hours and my biggest loss was 20% in 24 hours.

There are loads of stock market celebrities out there. While living in Canada I frequently came across a guy on TV called "Jim Cramer", whose show is called "Mad Money". On his show Jim makes the stock market kinda fun: he bounces around the studio with what seems like an endless supply of comedic props while people call him up for advice on what to buy and sell. So why am I talking about Jim? Well... since I'm terrible at the stock market but pretty good with data, why not actually look at how successful a real stock market expert is?

THE SETUP: They say most data analysis is 90% collection and preparation, and that was 100% true in this case, but here's what I did:

Note: I'm no financial expert, I'm merely seeing if Jim has the chops! (Is that a NZ saying?)

THE TLDR: In summary, this is what I found at a high level:

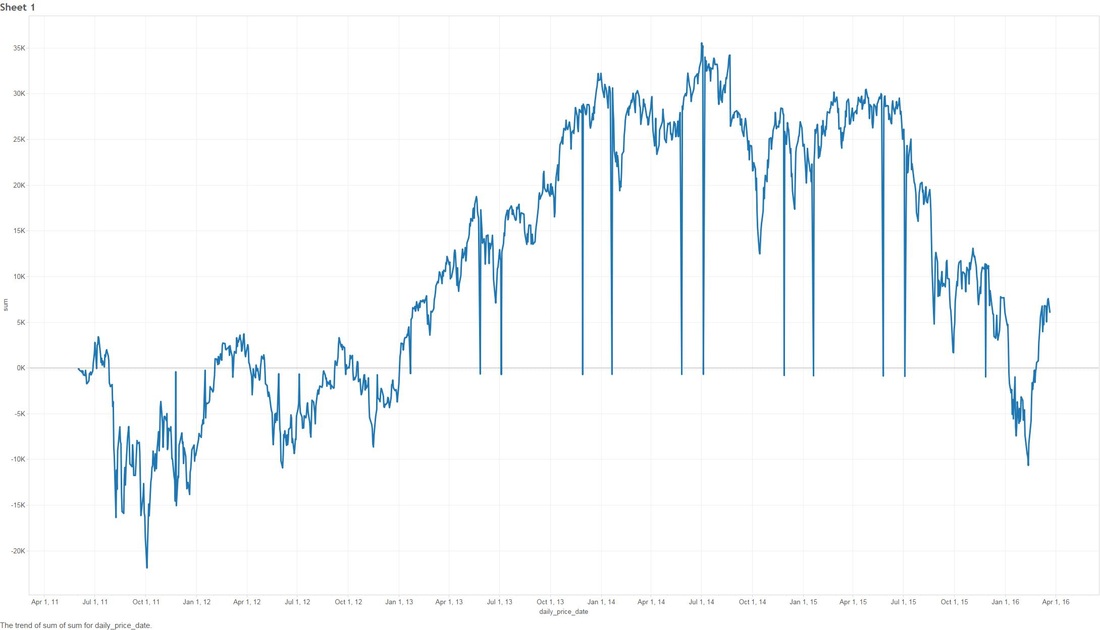

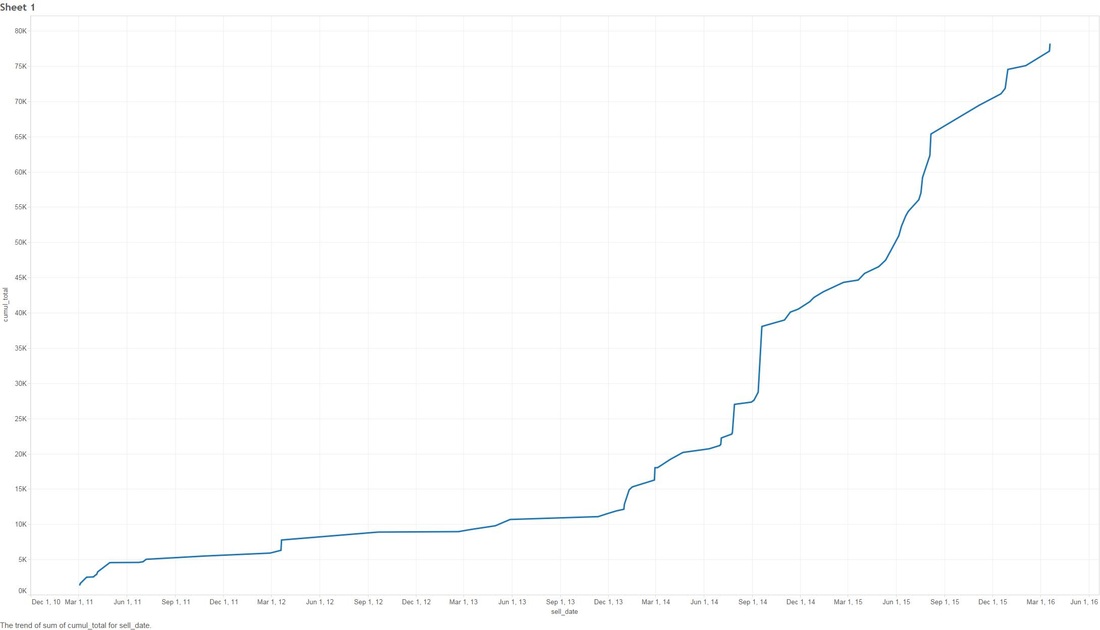

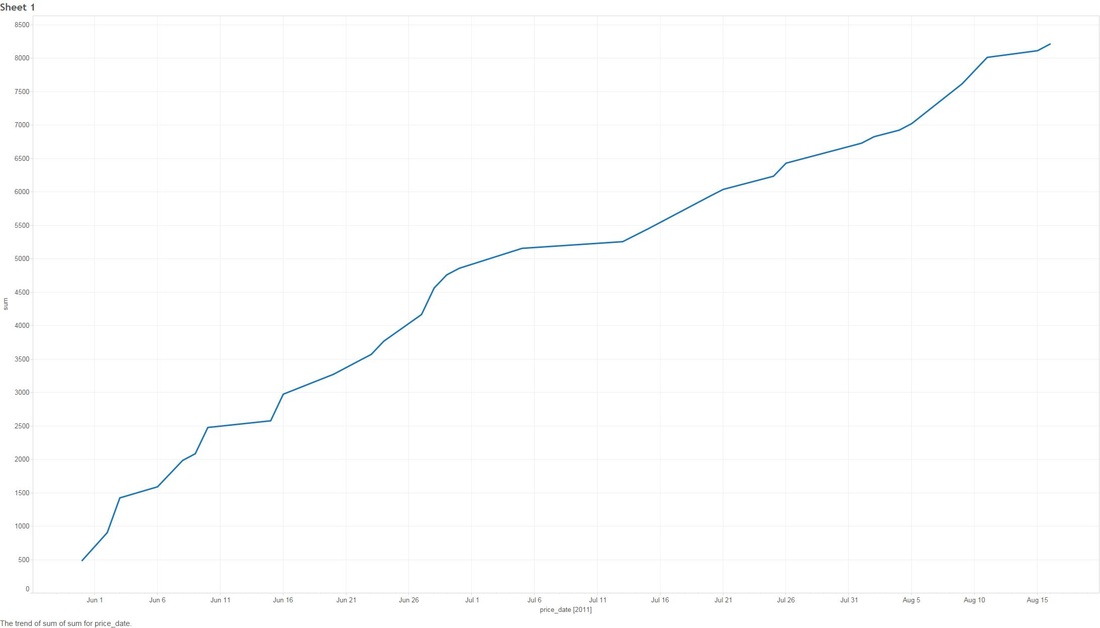

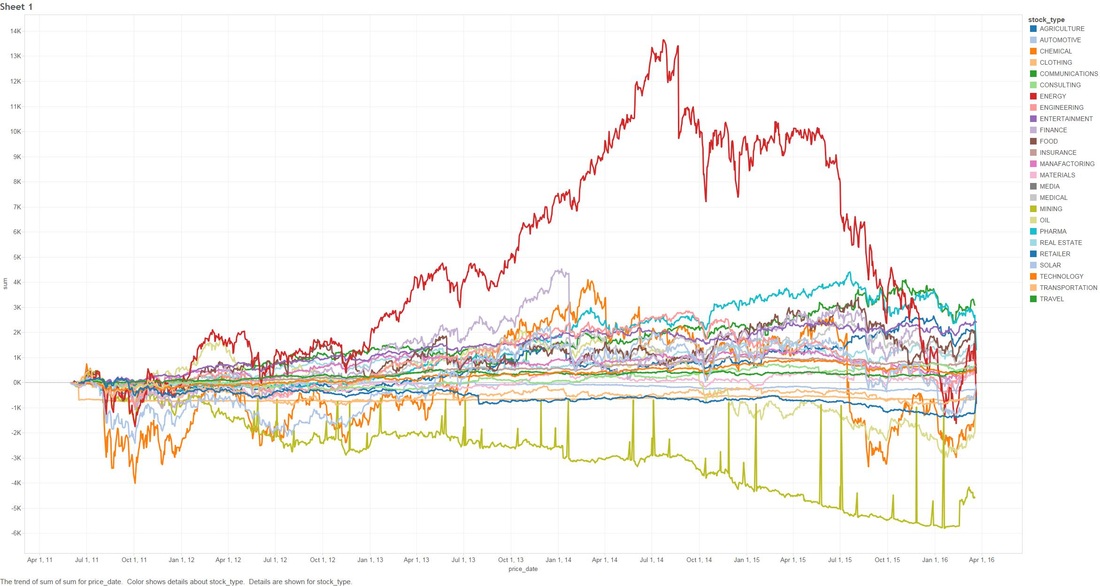

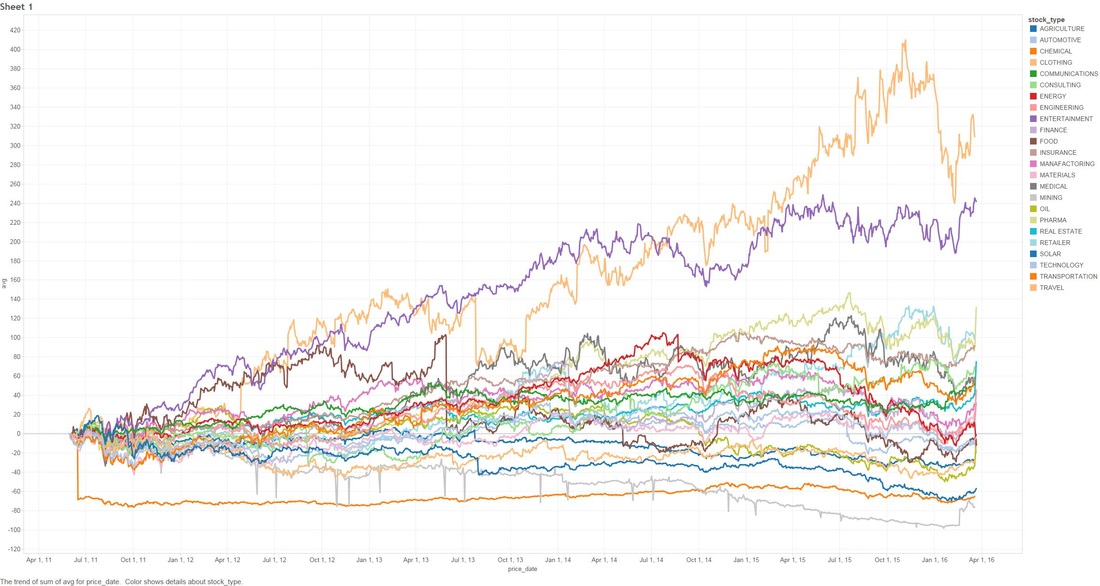

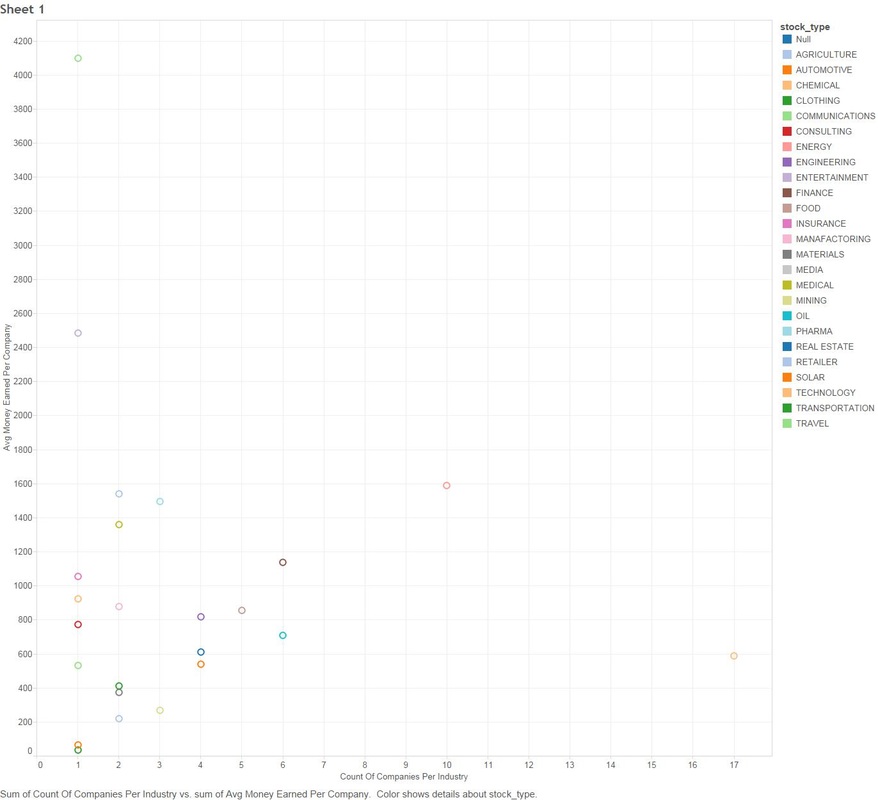

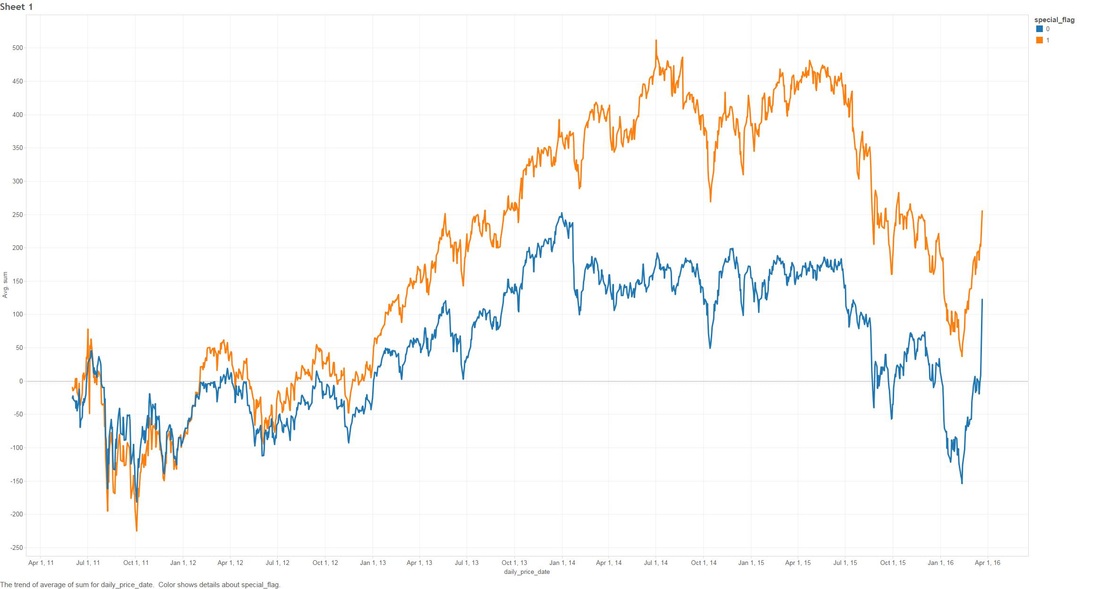

THE DEEPER DIVE: It's very useful to note that some of these analyses are based on "The Stupid", "The Realistic" and "The Perfect" stock market scenarios. Please, please don't think that you as an everyday person can achieve "The Perfect" based on what I'm saying (otherwise I wouldn't write this blog and would keep all the information to myself!).

Jim's Recommended Stock Value Over Time

In the above scenario I have fictitiously purchased $1,000 worth of stock for each Bull (meaning buy) recommendation. Our highest point of earnings is the 1st of July, 2014, where our portfolio had gained ~$35k in ~3 years. You might also see some points that dip to the zero line; this is where the Yahoo data still reports stock prices on American holidays but zeros them all out.

The Perfect

Buying $1,000 worth of each recommended buy (bull), then selling it at its highest price between 2011 and 2016 (again... I have to point out that no one on this planet can do this). We have earned ourselves a handsome $79,138, with 2014 and 2015 showing the most gains. That's earning almost 80% over 5 years!

The Realistic

Sell at a 10% gain (or at the max price if 10% isn't reached). This is probably the closest to what real home investors do: once a stock reaches a 10% gain we sell, and if it never gets there we sell at its highest price. We made a profit of $8,210. You might be thinking that's not much compared to the ~80% of the perfect sell, but the important thing to note here is that we made $8,210 in around 1-3 months.

Stock Industries Over Time

This one shows us the gains made by industry. Now, before we get too far ahead of ourselves, Jim Cramer may have recommended more stocks from a particular industry, which would show far more swing in that area. We'll clarify that below. (Remember this is the same as the very first graph: it assumes we never sell a share.)
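To make the selling rules concrete, here is a minimal Python sketch of how each one could be computed for a single recommendation and then summed across the portfolio. It assumes each recommendation comes with a list of daily closing prices starting on the day it was made, and that every sale happens at a daily close; the tickers and prices are made up, and this is not the code behind the graphs above. It also drops the zero-valued holiday rows mentioned earlier, since they would otherwise pull values down to zero. The post doesn't say how long the selling window was, so the sketch simply uses the whole price series.

```python
from typing import Callable, Dict, List, Sequence

STAKE = 1000.0  # dollars "spent" on each Bull (buy) recommendation, as in the post


def clean_prices(prices: Sequence[float]) -> List[float]:
    """Drop the zero closes Yahoo reports on US market holidays."""
    return [p for p in prices if p > 0]


def hold_profit(prices: Sequence[float]) -> float:
    """Buy on the first day and never sell (the first graph); value at the last close."""
    p = clean_prices(prices)
    return STAKE * (p[-1] / p[0] - 1)


def perfect_profit(prices: Sequence[float]) -> float:
    """'The Perfect': sell at the highest close after the purchase day."""
    p = clean_prices(prices)
    return STAKE * (max(p[1:]) / p[0] - 1)


def realistic_profit(prices: Sequence[float], target: float = 0.10) -> float:
    """'The Realistic': sell on the first close at least `target` above the
    buy price; if that never happens, fall back to the highest close."""
    p = clean_prices(prices)
    buy = p[0]
    for price in p[1:]:
        if price >= buy * (1 + target):
            return STAKE * (price / buy - 1)
    return STAKE * (max(p[1:]) / buy - 1)


def portfolio_profit(strategy: Callable[[Sequence[float]], float],
                     recommendations: Dict[str, Sequence[float]]) -> float:
    """Total profit from applying one selling rule to every recommendation."""
    return sum(strategy(prices) for prices in recommendations.values())


# Hypothetical daily closes, starting on the day of each recommendation.
recommendations = {
    "AAA": [100, 0, 104, 112, 90, 130, 120],  # the 0 is a holiday row to drop
    "BBB": [50, 48, 45, 0, 47, 49, 46],       # never reaches +10%
}

if __name__ == "__main__":
    for label, strategy in [("Hold", hold_profit),
                            ("Perfect", perfect_profit),
                            ("Realistic", realistic_profit)]:
        print(f"{label}: ${portfolio_profit(strategy, recommendations):,.2f}")
```

Changing `target` (for example to 0.05 or 0.20) is an easy way to see how sensitive "The Realistic" result is to the choice of 10%.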
Average Percent Gain By Industry

We are getting closer to what the numbers reflect here. Be aware that the two heavy hitters (Entertainment and Travel) each contain only 1 company. Cramer made great picks there, but it's not really fair to include them in a "by industry" analysis.

Average Earned by Count Of Companies in Industry

The above scenario is based on "The Perfect" sell. This graph sheds more light on what I was talking about above: Travel and Entertainment show massive earning increases, but there is only 1 company in each of those categories. Jim Cramer has numerous segments on his show where he talks about diversification, and the violent percent swings in either direction are mainly due to the small number of companies in those industries.

Segment Specials

This graph shows the average sell price for a share over time, split by "Specials". A recommendation with a special value of 1 was a special, and 0 means it came from the Lightning Round. A segment "Special" is one where Cramer may interview a CEO, or simply tells us what he feels is a buy and wants to let us in on it. It's interesting to note that Jim's "Specials" are really propping up the figures, with non-special recommendations falling flat and dropping lower in 2014.

Compare to the S&P and DOW

Below are the graphs for the S&P and the DOW over the same period.

S&P

DOW

What are the S&P or DOW? To quote Google:

S&P: "The Standard & Poor's 500, often abbreviated as the S&P 500, or just "the S&P", is an American stock market index based on the market capitalizations of 500 large companies having common stock listed on the NYSE or NASDAQ. The S&P 500 index components and their weightings are determined by S&P Dow Jones Indices."

DOW: The Dow Jones Industrial Average (DJIA) is a price-weighted average of 30 significant stocks traded on the New York Stock Exchange and the Nasdaq. The DJIA was invented by Charles Dow back in 1896.

Why are these relevant? Well, if you have been following the stock market since 2009, you'll know there has been some massive investment, and we want to make sure that what Jim is saying isn't just riding the coat-tails of a massive market climb.

FINISH! That's it, folks! I had fun looking through the data, less so collecting and cleaning it. I'm kinda tempted to cut more time segments for further analysis, especially since the market is in a bit of turmoil in 2016, but there is a baby on the way and there is much to do!


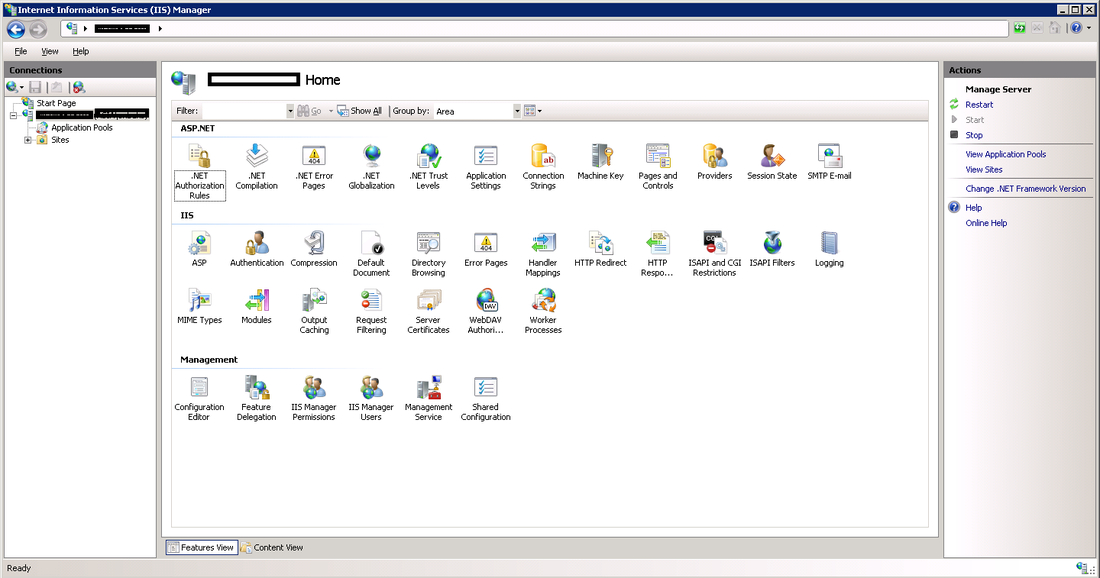

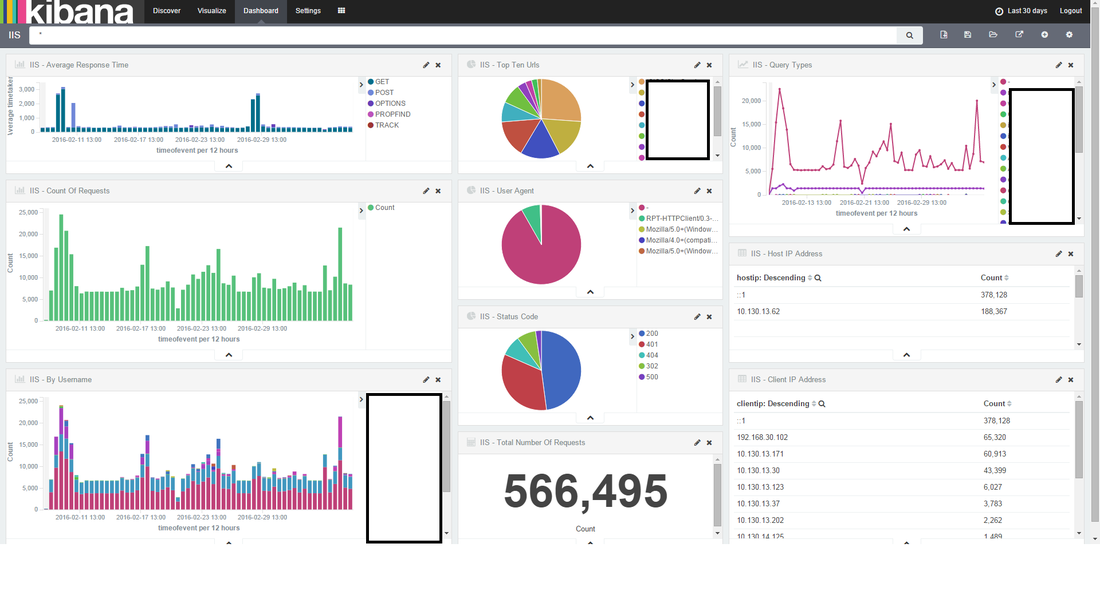

I'm going to cover how to get IIS web logs into Elasticsearch via Logstash and nxlog. There are a lot of ways to do this, but in short we need to install what's called a "log shipper" on a Windows server to fire events through to Logstash. I see a lot of people online installing Logstash on each machine; I try to avoid this, as Logstash is quite 'heavy' in terms of system resources (I have seen many cases where it uses up to 4GB of memory just to send logs). I'm going to be using the following versions of Elastic stuff for this post:

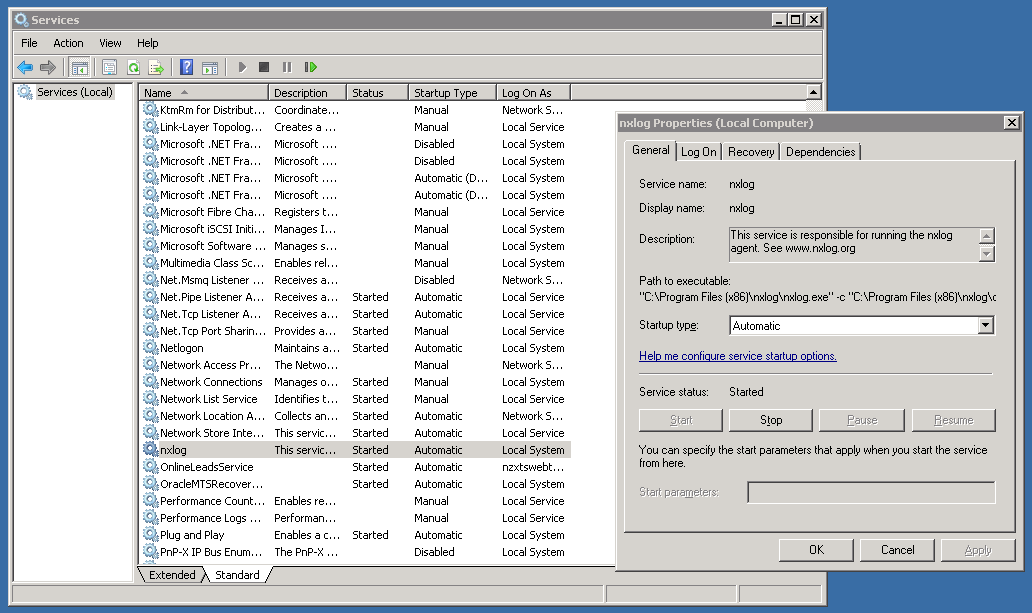

Setting up Nxlog

First you need to download the installer from the nxlog website. The community edition is all you need. Nxlog installs in "C:\Program Files (x86)\nxlog" by default. There are two folders that you will become familiar with:

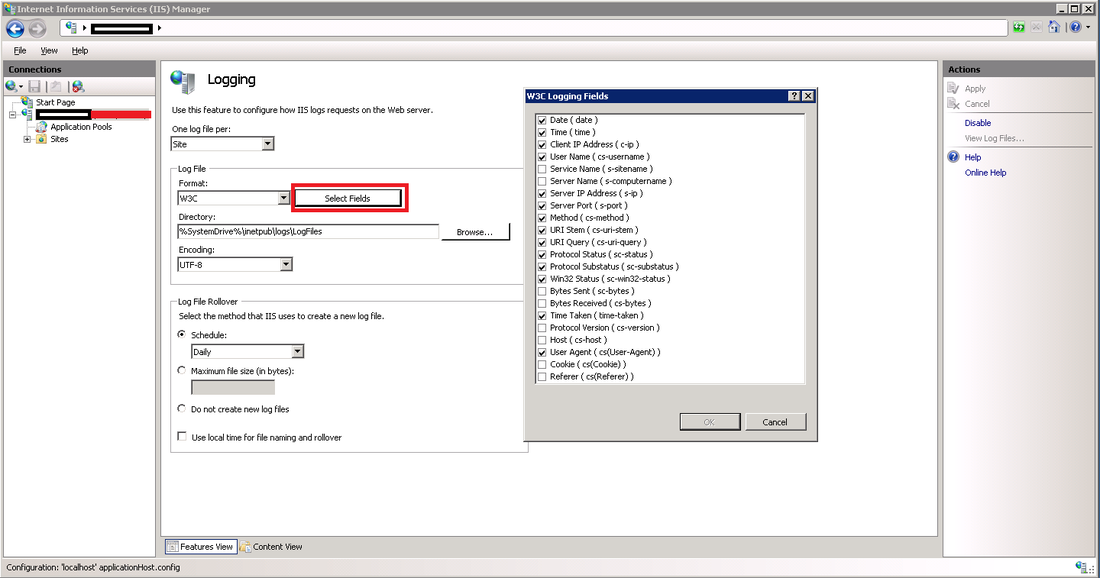

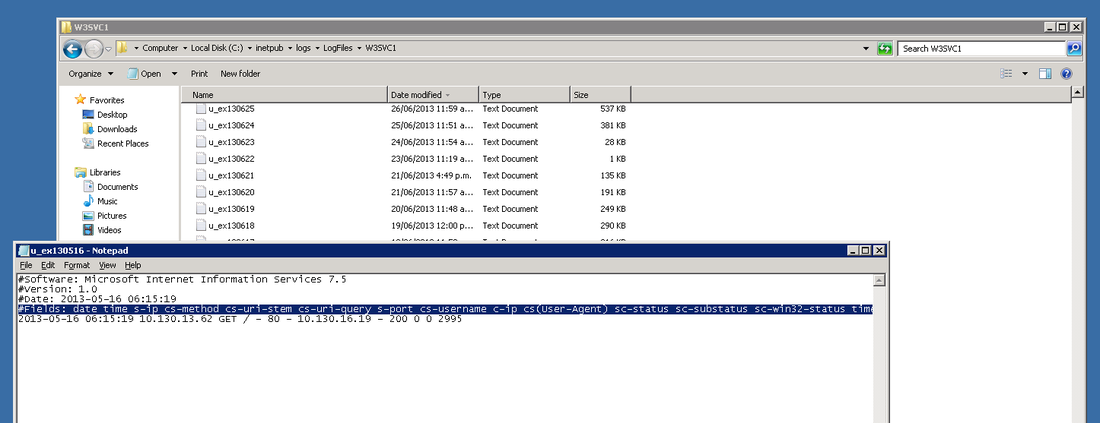

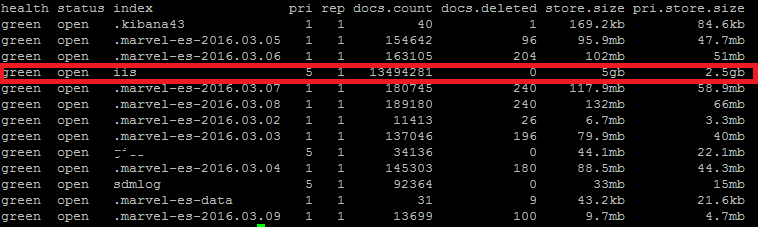

In the conf directory, back up the conf file by copying and pasting it, and rename the copy to something like "nxlog_backup". In the original conf file, remove any default log configs and add the following lines for IIS:

```
<Input iis_1>
    Module        im_file
    File          "C:\inetpub\logs\LogFiles\W3SVC1\u_ex*.log"
    ReadFromLast  False
    SavePos       False
    Exec          if $raw_event =~ /^#/ drop();
</Input>

<Output out_iis>
    Module        om_tcp
    Host          put.logstash.ip.address.here
    Port          3516
    OutputType    LineBased
</Output>

<Route 1>
    Path          iis_1 => out_iis
</Route>
```

Two values need to be altered: "File" needs to point to the location where IIS is writing its logs, and "Host" needs to be the IP address of your Logstash server. We're not quite ready to start nxlog yet, but that's the Windows side mostly done (fairly easy, huh?).

Set up a Config in Logstash

Below is my config for Logstash. You will notice the GROK pattern is based on how IIS has been configured to write its logs (we will get onto that soon):

```
##### LOGSTASH CONFIG #####
input {
  tcp {
    type => "iis"
    port => 3516
    host => "your.logstash.host.ip.here"
  }
}

filter {
  if [type] == "iis" {
    grok {
      match => {"message" => "%{TIMESTAMP_ISO8601:timeofevent} %{IPORHOST:hostip} %{WORD:method} %{URIPATH:page} %{NOTSPACE:query} %{NUMBER:port} %{NOTSPACE:username} %{IPORHOST:clientip} %{NOTSPACE:useragent} %{NUMBER:status} %{NUMBER:response} %{NUMBER:win32status} %{NUMBER:timetaken}"}
    }
  }
}

output {
  elasticsearch {
    user => "user"
    password => "password"
    hosts => ["elastic.node.1","elastic.node.2","elastic.node.3"]
    index => "iis"
    document_type => "main"
  }
}
```

A few things you're going to need to alter: replace your.logstash.host.ip.here with your Logstash host IP, and swap the hosts entries for your own Elasticsearch nodes. You may also notice that I have a user and password in my output section; that's because I have Shield enabled on the cluster. If you don't use Shield, just remove those entries.
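Since a field-order mismatch between IIS and the grok pattern is the easiest way for this setup to go wrong, it can help to test a few lines from your own logs before wiring everything together. Below is a minimal Python sketch that mirrors the grok pattern above with an ordinary regular expression and skips "#" header lines the way the nxlog `Exec` rule does. It is an approximation, not Logstash itself: `\S+` and `\d+` are looser than grok's `IPORHOST`, `URIPATH` and `NUMBER`, the function name `parse_iis_line` is hypothetical, and the sample log line is made up.

```python
import re
from datetime import datetime
from typing import Optional

# Plain-regex stand-in for the grok pattern above (an approximation: grok's
# IPORHOST, URIPATH and NUMBER patterns differ from the \S+ and \d+ used here).
# The field order must match your own IIS "Select Fields" configuration.
IIS_LINE = re.compile(
    r"(?P<timeofevent>\d{4}-\d{2}-\d{2} \d{2}:\d{2}:\d{2}) "
    r"(?P<hostip>\S+) "
    r"(?P<method>\w+) "
    r"(?P<page>\S+) "
    r"(?P<query>\S+) "
    r"(?P<port>\d+) "
    r"(?P<username>\S+) "
    r"(?P<clientip>\S+) "
    r"(?P<useragent>\S+) "
    r"(?P<status>\d+) "
    r"(?P<response>\d+) "
    r"(?P<win32status>\d+) "
    r"(?P<timetaken>\d+)$"
)

INT_FIELDS = ("port", "status", "response", "win32status", "timetaken")


def parse_iis_line(line: str) -> Optional[dict]:
    """Return the fields of one IIS log line, or None if it would not be indexed cleanly."""
    line = line.rstrip("\r\n")
    # IIS writes header lines such as '#Software' and '#Fields'; nxlog's
    # `if $raw_event =~ /^#/ drop();` discards them before shipping.
    if line.startswith("#"):
        return None
    match = IIS_LINE.match(line)
    if match is None:
        # Logstash would still index such a line, tagged _grokparsefailure.
        return None
    event = match.groupdict()
    for field in INT_FIELDS:
        event[field] = int(event[field])
    # Same layout as the "yyyy-MM-dd HH:mm:ss" date format used in the index mapping.
    event["timeofevent"] = datetime.strptime(event["timeofevent"], "%Y-%m-%d %H:%M:%S")
    return event


if __name__ == "__main__":
    sample = [
        "#Software: Microsoft Internet Information Services 8.5",
        "2016-04-12 03:15:42 10.0.0.5 GET /default.aspx - 80 - 203.0.113.7 "
        "Mozilla/5.0+(Windows+NT+10.0) 200 0 0 46",
    ]
    for raw in sample:
        print(parse_iis_line(raw))
```

If a real line from `u_ex*.log` comes back as `None`, compare it against the `#Fields:` header at the top of the file and adjust both this regex and the grok pattern to match.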
Another important thing to note is the GROK pattern I'm using. If you copy and paste my example you might have a bad time, because your IIS logging configuration may include more or fewer fields than mine. You can find your IIS log configuration by going onto your Windows server, opening "Internet Information Services (IIS) Manager" and selecting the "Logging" icon. Once you have selected the Logging option, click the "Select Fields" button; this shows what IIS has configured for web-server logs. Alternatively, you can see the order of the fields included in each log file in the "#Fields:" line at the top of each IIS log. Check the screenshot below.

ELASTIC SEARCH INDEX N STUFF

Before you fire up Logstash, you will need an index to write to. First create the index; in my case I have called it "iis":

```
curl -XPUT 'http://your.elastic.server.ip:9200/iis/'
```

I have created a fairly simple mapping to go with our index:

```
curl -XPUT 'http://your.elastic.server.ip:9200/iis/_mapping/main' -d '
{
  "main": {
    "properties": {
      "timeofevent": {"type": "date", "format": "yyyy-MM-dd HH:mm:ss"},
      "hostip":      {"type": "string", "index": "not_analyzed"},
      "method":      {"type": "string", "index": "not_analyzed"},
      "page":        {"type": "string", "index": "not_analyzed"},
      "query":       {"type": "string", "index": "not_analyzed"},
      "port":        {"type": "integer"},
      "username":    {"type": "string", "index": "not_analyzed"},
      "clientip":    {"type": "string", "index": "not_analyzed"},
      "useragent":   {"type": "string", "index": "not_analyzed"},
      "status":      {"type": "integer"},
      "response":    {"type": "integer"},
      "win32status": {"type": "integer"},
      "timetaken":   {"type": "integer"}
    }
  }
}'
```

FIRE IT UP!
LOGSTASH

Before you fire up Logstash, test your newly created config file by running the following command:

```
sudo /etc/init.d/logstash configtest
```

If all passes, you can start Logstash by running:

```
sudo /etc/init.d/logstash start
```

Remember, if things start to go awol, you can check the Logstash logs by running:

```
sudo tail /var/log/logstash/logstash.log
```

NXLOG

You can start nxlog through PowerShell or through the Services manager. Check that everything is working by looking at the size of our index in Elastic:

```
curl 'your.elastic.ip.address:9200/_cat/indices?v'
```

That's it, folks! If you're using Kibana you can make a real pretty dashboard to display what's happening on your web server in real time (here's one below I made in about 20 minutes):

Author

New Zealand big data nerd, facial hair sculptor and classic car fanatic. Owner of needles.io, freelance big data consultant, ex-Activision.

Archives

April 2016
